Hello!

I'm a student at KSU working on my senior project, and I need help designing a circuit to manage a GPU's power externally. The idea is that our device takes in power from the power supply, measures the GPU's power draw, and lets the user adjust that draw on the fly via a Raspberry Pi 4. Unfortunately, none of my professors are able to help me design the power-management circuit, so I'm reaching out for help.

What I know is that the GPU requires a constant 12V input, so I need to take in 12V from the power supply and maintain a 12V output. In my mind, the GPU looks like a variable resistor: under load, its internal resistance drops and the current flowing through our device increases. The idea is that we limit this current draw, which allows us to externally limit the power consumption of the GPU.

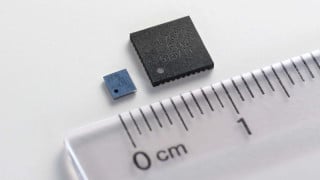

I'm working with an old RX580 as my test GPU. It has a single PCIe 8-pin connector with three 12V inputs. The card is rated at 220W, which means that approximately 145W should on average be going through the 8-pin. That gives me about 48W per 12V wire, or about 4A per wire. This should be my approximate ceiling, and I would want to limit that 4A down to probably no more than 3A. This does not account for transient spikes, and ideally our device would have an unregulated mode that bypasses the current limiting altogether.
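As a sanity check on those numbers, here is a small Python sketch of the arithmetic. It assumes the remaining 75W of the 220W rating comes from the PCIe slot (that split is my assumption for where the 145W figure comes from) and that the load is shared evenly across the three 12V wires. The function name and parameters are made up for illustration.

```python
def per_wire_budget(card_w=220.0, slot_w=75.0, rail_v=12.0, wires=3):
    """Estimate the average power and current carried by each 12V wire.

    slot_w is an assumed share supplied by the PCIe slot; even sharing
    across the wires is also an assumption.
    """
    connector_w = card_w - slot_w        # power through the 8-pin
    per_wire_w = connector_w / wires     # power per 12V wire
    per_wire_a = per_wire_w / rail_v     # current per 12V wire
    return connector_w, per_wire_w, per_wire_a


def capped_connector_power(limit_a, rail_v=12.0, wires=3):
    """Connector power if every wire is held at limit_a amps."""
    return limit_a * rail_v * wires


connector_w, per_wire_w, per_wire_a = per_wire_budget()
print(f"8-pin: {connector_w:.0f} W, per wire: {per_wire_w:.1f} W / {per_wire_a:.2f} A")
print(f"8-pin power at a 3 A per-wire limit: {capped_connector_power(3.0):.0f} W")
```

Under these assumptions, a 3A per-wire limit would cap the 8-pin at about 108W, down from roughly 145W.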

I've been doing a lot of research and think the best way to go is a buck-boost converter, since it allows the output voltage to equal the input voltage. I originally thought the LM5118 would be a good fit, but I'd have no way of controlling its current limit from the Pi 4. I then considered the TPS55288, as it has an I2C interface and allows for current limiting in the ranges I need. Both have development boards, which is great.
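To show what I mean by setting the limit from the Pi, here is a rough Python sketch of converting a target current into a limit code for a converter that sets its output current limit as a voltage across a sense resistor. The 10 mΩ sense resistor, 0.5 mV step, and 7-bit code range are my assumptions and need to be checked against the TPS55288 datasheet and the board's actual sense resistor. The function name is hypothetical, and the actual I2C write is left out.

```python
def current_limit_code(limit_ma, sense_mohm=10, step_uv=500, max_code=127):
    """Convert a current limit in mA into a sense-voltage step code.

    All defaults are assumed values, not confirmed register details.
    Integer math: mA * mOhm = uV across the sense resistor.
    """
    if limit_ma < 0:
        raise ValueError("current limit must be non-negative")
    sense_uv = limit_ma * sense_mohm   # target voltage across the sense resistor
    code = sense_uv // step_uv         # round down so the limit is never exceeded
    return min(code, max_code)         # clamp to the assumed code range


print(current_limit_code(3000))  # code for a 3 A limit under the assumed values
```

Rounding down rather than to nearest keeps the programmed limit at or below the requested current.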

TI provides prebaked schematics and basic simulations for these designs, but I'm a little overwhelmed by all the ICs and by making sure I select the correct parts so that we get it right the first time, if not the second. Any kind of help and guidance would be appreciated.
