I'm modifying a legacy design and have come across an interesting problem that my maths skills are far too rusty to solve.

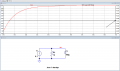

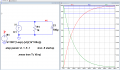

I have a subcircuit which is simply a capacitor connected in parallel with a resistor, and supplied by a constant current source.

The initial condition under consideration is 0 V across the capacitor and 0 A input current.

Would anyone be able to help me derive a formula to determine the time taken for the capacitor to charge, following application of a constant current I, to a defined voltage V, given R, C, and I?
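
For reference, here is a rough sketch of what I believe the standard first-order result looks like. It assumes an ideal current source stepping from 0 to I at t = 0, ideal R and C, and V(0) = 0. Summing currents at the node gives I = V/R + C·dV/dt, whose solution is V(t) = I·R·(1 - exp(-t/(R·C))). Solving for time gives t = -R·C·ln(1 - V/(I·R)), which only has a solution when the target V is below the final value I·R. The function name and argument names below are my own placeholders, not anything from the legacy design.

```python
import math


def charge_time(r_ohms, c_farads, i_amps, v_target):
    """Time in seconds for a parallel RC, driven by a current step, to reach v_target.

    Assumes ideal components, a step from 0 A to i_amps at t = 0,
    and 0 V across the capacitor at t = 0.
    """
    # The capacitor voltage settles towards I*R as t goes to infinity.
    v_final = i_amps * r_ohms
    if not 0 <= v_target < v_final:
        raise ValueError("v_target must satisfy 0 <= v_target < I*R")
    # Invert V(t) = I*R*(1 - exp(-t/(R*C))) for t.
    return -r_ohms * c_farads * math.log(1 - v_target / v_final)


if __name__ == "__main__":
    # Illustrative values only: 1 kOhm, 1 uF, 10 mA, target 5 V (half of I*R).
    print(charge_time(1e3, 1e-6, 10e-3, 5.0))
```

With a target of exactly half of I·R, the result reduces to R·C·ln(2), which is a handy sanity check.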
