I'm not well versed in hardware, but I feel I have a sound understanding of the theory.

I have a DC motor driving an AC generator, which provides a three-phase output. To approximate the power output of the generator with a constant voltage (10 V) applied to the DC motor, I used several load resistances: 10, 100, 1000 and 10000 ohms. What I found was quite baffling.
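For reference, this is roughly how the per-load power estimate can be worked out. It is only a minimal sketch: it assumes a purely resistive load and a sinusoidal phase voltage, the function names are made up for illustration, and the 1.0 V reading in the usage line is a placeholder rather than one of my measurements.

```python
import math

# The four load resistances used in the test, in ohms.
LOADS_OHMS = [10, 100, 1000, 10000]


def rms_from_peak(v_peak):
    """Convert a peak voltage to RMS. Only valid for a sinusoid (assumption)."""
    return v_peak / math.sqrt(2)


def load_current(v_rms, r_load):
    """RMS current through a purely resistive load, I = V / R."""
    if r_load <= 0:
        raise ValueError("load resistance must be positive")
    return v_rms / r_load


def load_power(v_rms, r_load):
    """Average power dissipated in a purely resistive load, P = V_rms^2 / R."""
    if r_load <= 0:
        raise ValueError("load resistance must be positive")
    return v_rms ** 2 / r_load


def power_table(v_rms_by_load):
    """Map each load resistance (ohms) to (current in A, power in W)."""
    return {
        r: (load_current(v, r), load_power(v, r))
        for r, v in v_rms_by_load.items()
    }


if __name__ == "__main__":
    # Placeholder reading, NOT measured data: 1.0 V RMS across every load.
    placeholder = {r: 1.0 for r in LOADS_OHMS}
    for r, (i, p) in power_table(placeholder).items():
        print(f"{r:>6} ohm: I = {i:.6f} A, P = {p:.6f} W")
```

Substituting the measured RMS voltage for each load gives the current and power for that load directly, which makes it easy to compare the four cases side by side.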

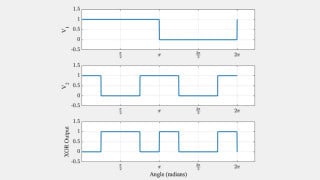

Note that I recently discovered that only one of the three phases produces a true sinusoidal waveform, whilst the other two are heavily distorted.

The phase with the clean sinusoidal waveform was used for testing, and each of the load resistances was placed across it in turn. As the resistance increased, the current through the load decreased, as expected, but the voltage drop across the load actually increased. Is there any feasible explanation for this? After three years at university, I'm completely stumped by this finding.

Thanks in advance.
