In a community project, I was assigned to design an FIR filter that eliminates the 60 Hz hum and its harmonics from a voice signal without perceptibly degrading the quality or intelligibility of the conversation, while meeting the real-time constraints (latency and computational cost) typical of telephony and VoIP systems, and then to implement it in Verilog. These are the requirements:

- Sampling rate (Fs):

- 8 kHz (narrowband telephony, useful band ≈ 300–3400 Hz)

- 16 kHz (wideband telephony, useful band ≈ 50–7000 Hz)

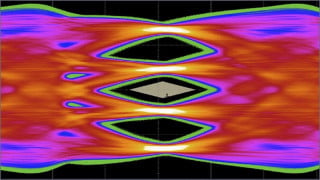

- Frequencies to suppress (notches):

- 60 Hz (main hum)

- Optional harmonics: 120 Hz, 180 Hz, 240 Hz, 300 Hz

- Attenuation at each notch:

- ≥ 50 dB at the center frequency of each harmonic

- Stopband bandwidth (BW):

- Approximately 10 Hz (example: 55–65 Hz for the 60 Hz notch)

- Adjustable: narrower if the tone is very pure, wider if there is mains frequency drift

- Passband ripple:

- ≤ 0.5–1 dB in the useful voice band outside the notches

- Maximum acceptable latency:

- ≤ 10 ms for real-time conversation

- If lower latency is required, IIR (biquad notch) is preferred over FIR

- Phase / phase distortion:

- FIR: linear phase, but requires very high order (hundreds or thousands of taps) → higher latency and computational cost

- IIR (biquad notch): non-linear phase, but very efficient with practically zero latency

- Resource usage:

- IIR biquad: ~2–4 multiplications per sample per notch (very efficient)

- Long FIR: tens to thousands of multiplications per sample (high CPU/memory cost)
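
To make the IIR alternative in the list above concrete, here is a minimal Python sketch of a single biquad notch at 60 Hz with Fs = 8 kHz. It assumes the widely used Audio EQ Cookbook coefficient formulas and takes Q as center frequency divided by the 10 Hz bandwidth; the function names `notch_biquad` and `gain_db` are hypothetical helpers for illustration, not part of the assignment.

```python
import cmath
import math


def notch_biquad(f0, bw, fs):
    """Return normalized (b, a) coefficients of a second-order notch.

    Assumption: Audio EQ Cookbook notch formulas, with Q = f0 / bw.
    """
    w0 = 2.0 * math.pi * f0 / fs
    q = f0 / bw
    alpha = math.sin(w0) / (2.0 * q)
    a0 = 1.0 + alpha
    # Zeros sit on the unit circle at +/- w0, poles just inside it.
    b = [1.0 / a0, -2.0 * math.cos(w0) / a0, 1.0 / a0]
    a = [1.0, -2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0]
    return b, a


def gain_db(b, a, f, fs):
    """Magnitude response in dB at frequency f (Hz), floored at -240 dB."""
    z1 = cmath.exp(-1j * 2.0 * math.pi * f / fs)  # z^-1 on the unit circle
    num = b[0] + b[1] * z1 + b[2] * z1 * z1
    den = a[0] + a[1] * z1 + a[2] * z1 * z1
    return 20.0 * math.log10(max(abs(num / den), 1e-12))


FS = 8000.0  # narrowband telephony rate from the requirements
b, a = notch_biquad(60.0, 10.0, FS)
for f in (55.0, 60.0, 65.0, 300.0, 1000.0):
    print(f"{f:7.1f} Hz: {gain_db(b, a, f, FS):8.2f} dB")
```

Under these assumptions the gain is roughly -3 dB at 55 Hz and 65 Hz, so the ≥ 50 dB figure is reached only very close to the center frequency, not across the whole 10 Hz band. In this normalized form `b[0]` equals `b[2]` and `b[1]` equals `a[1]`, which is why the multiplier count per notch can drop below five.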

My questions are the following:

- What are the steps I should follow to design the filter?

- Are the requirements correct, taking into account the ideal behavior of the filter?

- Is the window method acceptable to solve this problem?
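
As context for the last question, here is a rough Python estimate of how many taps a window-method FIR would need compared with the 10 ms latency budget. It is a sketch under stated assumptions: a Hamming window, the common rule of thumb that its transition width is about 3.3·Fs/N, and a hypothetical 5 Hz transition width (half of the 10 Hz stopband). The helper names are made up for illustration.

```python
import math


def max_taps_for_latency(fs, max_latency_s):
    """Largest odd tap count whose group delay, (N - 1) / 2 samples, fits the budget."""
    max_delay_samples = math.floor(max_latency_s * fs + 1e-9)
    return 2 * max_delay_samples + 1


def hamming_taps_for_transition(fs, transition_hz):
    """Rule-of-thumb tap count for a Hamming-window design: N ~ 3.3 * fs / df."""
    n = math.ceil(3.3 * fs / transition_hz)
    return n if n % 2 == 1 else n + 1  # keep N odd (symmetric, integer delay)


def latency_ms(n_taps, fs):
    """Group delay of a linear-phase FIR with n_taps taps, in milliseconds."""
    return 1000.0 * (n_taps - 1) / (2.0 * fs)


for fs in (8000, 16000):
    budget = max_taps_for_latency(fs, 0.010)   # 10 ms requirement
    needed = hamming_taps_for_transition(fs, 5.0)  # assumed 5 Hz transition
    print(f"Fs={fs} Hz: budget {budget} taps, "
          f"needed ~{needed} taps ({latency_ms(needed, fs):.0f} ms)")
```

With these assumptions the latency budget allows 161 taps at 8 kHz and 321 taps at 16 kHz, while the rule of thumb asks for about 5281 and 10561 taps, which is roughly 330 ms of delay in both cases. A Hamming window also gives only about 53 dB of stopband attenuation, so the margin over the 50 dB requirement would be small.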
