Hi guys,

Recently I have needed to include a DC-DC boost converter (1 V to 5 V input, 5 V output) in a design, mainly because the battery, a 3400 mAh Li-ion, has a usable range of 4.2 V down to 2.5 V. The converter will supply a 3.3 V LDO regulator (LDO minimum input = 3.5 V). The system also uses USB power as a second input supply to the LDO.

The average current from the LDO is 130 mA at 3.3 V, with peaks of 500 mA for <100 ms, so a 470 µF cap on the output would help.
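As a rough sanity check on the 470 µF figure, here is a small sketch using the ideal capacitor relation ΔV = I·t/C. It ignores ESR and the LDO's own transient response, and the 0.1 V allowed droop is an assumed number, not one from the design.

```python
def cap_droop_v(extra_current_a, duration_s, capacitance_f):
    """Voltage droop if the capacitor alone supplied the extra current."""
    return extra_current_a * duration_s / capacitance_f


def hold_time_s(extra_current_a, allowed_droop_v, capacitance_f):
    """How long the capacitor alone can supply the extra current
    before drooping by allowed_droop_v."""
    return capacitance_f * allowed_droop_v / extra_current_a


CAP_F = 470e-6                # 470 uF output capacitor (from the post)
EXTRA_A = 0.500 - 0.130       # peak minus average load current
PEAK_S = 0.100                # worst-case peak duration
ALLOWED_DROOP_V = 0.1         # assumption: tolerable dip on the 3.3 V rail

droop = cap_droop_v(EXTRA_A, PEAK_S, CAP_F)
hold = hold_time_s(EXTRA_A, ALLOWED_DROOP_V, CAP_F)

print(f"Droop if the cap carried the whole peak: {droop:.1f} V")
print(f"Time the cap alone holds within {ALLOWED_DROOP_V} V: {hold * 1e6:.0f} us")
```

Under these assumptions the cap on its own only covers a fraction of a millisecond, so it smooths the edge of the peak while the LDO and whatever feeds it still have to deliver the full 500 mA for the rest of the burst.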

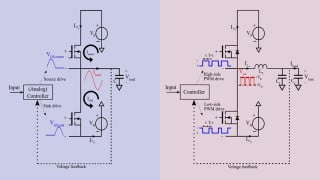

The problem, as always, is that any DC-DC boost converter is very inefficient in terms of input current when the input voltage is 1 to 2 V lower than the 5 V output, as it is in my case (see the example table from the manufacturer).
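To put rough numbers on that, the sketch below uses the power-balance relation for a boost converter, I_in = (V_out × I_out) / (η × V_in). The 85% efficiency is an assumed flat figure for illustration only; the manufacturer's table gives the real values, which typically get worse as the input voltage drops.

```python
def boost_input_current_a(v_in, v_out, i_out, efficiency):
    """Input current of a boost converter from power balance."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    if v_in <= 0:
        raise ValueError("v_in must be positive")
    return (v_out * i_out) / (efficiency * v_in)


ASSUMED_EFFICIENCY = 0.85   # assumption, not taken from any datasheet
V_OUT = 5.0                 # converter output feeding the LDO
I_OUT = 0.130               # average LDO load current passes straight through

for v_batt in (4.2, 3.5, 3.0, 2.5):
    i_in = boost_input_current_a(v_batt, V_OUT, I_OUT, ASSUMED_EFFICIENCY)
    print(f"V_batt = {v_batt:.1f} V -> battery current = {i_in * 1000:.0f} mA")
```

Even with a constant efficiency, the battery current climbs steadily as the battery voltage falls, and the LDO then burns off the difference between 5 V and 3.3 V as heat on top of that.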

It seems to me that it would be a much better power design to keep the DC-DC converter disabled until the battery voltage has fallen as close as possible to the LDO's minimum input (shutdown) level, and only then enable the converter to boost to 5 V.

Battery voltage is awkward to monitor because it drops as the load current changes, so a bigger margin has to be allowed when choosing the point at which to switch over to the DC-DC converter.
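One way to size that margin is to estimate the extra sag during a current peak as I × R and add it to the LDO's minimum input. The sketch below does that; the 0.1 Ω source resistance and the 50 mV measurement allowance are purely illustrative assumptions, and any drop in a switch or diode between the battery and the LDO would have to be added as well.

```python
def cutover_threshold_v(ldo_min_in_v, i_avg_a, i_peak_a,
                        source_resistance_ohm, measurement_margin_v):
    """Battery voltage (read at average load) below which the boost
    converter should be enabled, so that a current peak does not pull
    the LDO input under its minimum."""
    extra_sag_v = (i_peak_a - i_avg_a) * source_resistance_ohm
    return ldo_min_in_v + extra_sag_v + measurement_margin_v


threshold = cutover_threshold_v(
    ldo_min_in_v=3.5,            # from the post
    i_avg_a=0.130,               # from the post
    i_peak_a=0.500,              # from the post
    source_resistance_ohm=0.1,   # assumption: cell plus wiring resistance
    measurement_margin_v=0.05,   # assumption: ADC and divider error
)
print(f"Enable the boost converter below about {threshold:.2f} V")
```

The internal resistance of a real cell rises with age and at low temperature, so the resistance figure is the one most worth measuring on the actual hardware.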

I am contemplating an ADC monitor on the battery with the CPU controlling the cutover, where once the converter has been activated the system won't go back to running from the battery alone.

Has anyone taken this approach and found it effective, or is it just extra work for little gain? I'd like to hear from those who have designed these before.
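For reference, the latching cutover described above could look something like the sketch below. It is written in Python for readability rather than as firmware; the class name, the 3.6 V threshold and the three-sample debounce are all hypothetical choices, and the readings stand in for whatever the ADC would return.

```python
class CutoverController:
    """Enables the boost converter once the battery has read below the
    threshold for several consecutive samples, then stays latched."""

    def __init__(self, threshold_v, samples_required=3):
        self.threshold_v = threshold_v
        self.samples_required = samples_required
        self._below_count = 0
        self.boost_enabled = False

    def update(self, battery_v):
        """Feed one ADC reading (in volts); returns the boost-enable state."""
        if self.boost_enabled:
            return True  # latched: never fall back to battery-only
        if battery_v < self.threshold_v:
            self._below_count += 1
        else:
            self._below_count = 0  # a good reading resets the debounce
        if self._below_count >= self.samples_required:
            self.boost_enabled = True
        return self.boost_enabled


# Simulated readings: one short dip during a load peak, then a real decline.
readings = [3.90, 3.55, 3.80, 3.58, 3.57, 3.55, 3.90]
controller = CutoverController(threshold_v=3.6, samples_required=3)
states = [controller.update(v) for v in readings]
print(states)
```

The consecutive-sample count is there so that a single sag during a 500 mA peak does not trigger the cutover, and the latch avoids chattering when the battery voltage recovers slightly after the load moves onto the converter. Whether the latch should be cleared when USB power or a charger is connected is a separate decision this sketch does not cover.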

Cheers

Sheldon
