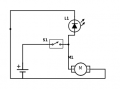

I'm missing some fundamental understanding of the current draw in the attached circuit. The power source is 2.8 V (two AA batteries), the LED is rated for 2.8 V @ 20 mA, and the load (I used a motor icon) is actually an electrical igniter that draws at least 1.3 A. The issue is that the LED, connected in parallel, will not light when the switch is flipped. I assume it's because the igniter is sucking up all the current, with nothing left for the LED? What phenomenon keeps the paltry 20 mA the LED needs from flowing to it?
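To put rough numbers on the situation, here is a minimal sketch of one possible explanation: the battery voltage sagging under the igniter's load. Only the 2.8 V supply, the 2.8 V LED rating, and the 1.3 A igniter draw come from the circuit described above. The internal resistance value and the names `ASSUMED_INTERNAL_R_OHM` and `terminal_voltage` are assumptions for illustration, and the igniter is treated as a fixed 1.3 A draw for simplicity.

```python
# Figures taken from the circuit description.
SUPPLY_OPEN_CIRCUIT_V = 2.8   # two AA cells, measured with no load
LED_FORWARD_V = 2.8           # voltage the LED is rated for at 20 mA
IGNITER_CURRENT_A = 1.3       # minimum draw of the igniter

# ASSUMPTION: combined internal resistance of the two cells plus wiring.
# This is a hypothetical value, not a measurement; real cells vary with
# chemistry, age, and temperature.
ASSUMED_INTERNAL_R_OHM = 0.5


def terminal_voltage(open_circuit_v, load_current_a, internal_r_ohm):
    """Voltage left at the battery terminals once the load current flows
    through the source's internal resistance (V = V_oc - I * R_int)."""
    return open_circuit_v - load_current_a * internal_r_ohm


v_loaded = terminal_voltage(
    SUPPLY_OPEN_CIRCUIT_V, IGNITER_CURRENT_A, ASSUMED_INTERNAL_R_OHM
)

# The LED sits in parallel with the igniter, so it sees the same sagged
# voltage. If that is below its rated forward voltage, it stays dark or dim.
led_can_light = v_loaded >= LED_FORWARD_V

print(f"Terminal voltage under load: {v_loaded:.2f} V")
print(f"Enough for the LED: {led_can_light}")
```

If this assumption is close to reality, the LED is not being starved of current as such; the voltage across the whole parallel branch drops below what the LED needs to conduct. Measuring the voltage across the igniter with the switch closed would confirm or rule this out.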

And of course the final question: how do I change the circuit so that the LED does get enough current to light when the switch is thrown?

Thanks, as always.
