I often see people saying things like "my code runs faster" or "your version is slower". But honestly, I don’t completely understand what makes one version faster than another, especially when both seem to do the same thing.

For example, I wrote this simple string manipulation code (it works fine), but I’d like to know:

- In what situations would this kind of code be considered slow?

- What exactly do people mean when they say "your code is slow" or "mine is faster"?

- Are they talking about compiler optimization, loop structure, memory access, or something else?

Any explanation would be great.

I wrote the code exactly the way the logic came to my mind.

C:

/******************************************************************************
 * Program: Write a function that swaps the first and last words in a string
 *          and prints the result.
 ******************************************************************************/

#include <stdio.h>

void swapping(char name[])
{
    int i = 0;
    int j = 0;
    char temp[50]; // Assumes the input is shorter than 49 characters.

    // Find the position of the first space.
    // We stop at the space because it separates the first and second words.
    // We also stop at '\0' so a string without a space cannot run past the end.
    while (name[i] != ' ' && name[i] != '\0')
    {
        i++;
    }

    // Only one word: nothing to swap, so print it unchanged.
    if (name[i] == '\0')
    {
        printf("%s", name);
        return;
    }

    // Skip the space itself so the output does not start with a space.
    i++;

    // Copy everything after the space (the second word)
    // into temp[]. This builds the "last word" part of the final output.
    while (name[i] != '\0')
    {
        temp[j] = name[i];
        i++;
        j++;
    }

    // Add a space to separate the two swapped words.
    // Without this, both words would merge together.
    temp[j++] = ' ';

    // Reset i to 0 to start copying from the beginning again.
    // Now we’ll copy the first word (the one before the space).
    i = 0;
    while (name[i] != ' ')
    {
        temp[j] = name[i];
        i++;
        j++;
    }

    // Add null terminator to mark end of the new string.
    temp[j] = '\0';

    // Print the final swapped string.
    printf("%s", temp);
}

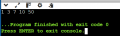

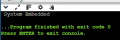

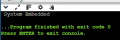

int main(void)
{
    char name[] = "Embedded System";

    swapping(name);

    return 0;
}
