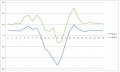

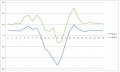

Hello, first post here, so apologies if I've chosen the wrong forum. I manage a small business facility that recently installed solar. The primary service is 1200A 208V 3PH, and the solar has a maximum capacity of about 100 kW. When we purchased the solar system I also had them install a Wattnode device with three 1200A CTs on the main service so I could get data on facility consumption. All of the data from the Utility meter, the solar inverters, and the Wattnode is available to me to download in Excel format, but not in real time.

I have compared the data from the Utility meter to my Wattnode data for about 3 months, and it is consistently different. For example, I ran a 24-hour data set comparison and the Utility (red line) was about 10 kWh more than my Wattnode data (blue line). See graph. On this particular day the solar was out-producing my consumption, so you see the graph cross 0 into the negative (power back to the grid).

We have run multiple calibration tests on all of the data collection equipment and it passes, but I still have this offset between what my facility data says and what the Utility meter says. Multiple ideas have been discussed (power factor, VARs vs. Watts, phase imbalance, harmonics, etc.) but none seems to be a definitive answer. Maybe it's a simple math error, but I don't think so. Bottom line: the solar is producing what was planned and the facility usage is generally the same as the historical data shows, but my Utility bill reflects only a small cost offset. Certainly not enough to pay for the system as planned.
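In case it helps anyone check my math, here is a rough sketch of how a 24-hour comparison like this can be totaled from the downloaded interval data. It assumes each source can be reduced to a list of average kW readings at a fixed interval, positive for power drawn from the grid and negative for power sent back. The 15-minute interval, the function names, and the sample numbers in the usage note are assumptions for illustration only, not the actual export format or my actual data.

```python
def integrate_kwh(power_kw, interval_minutes):
    """Total interval readings (average kW per interval) into energy.

    Returns (net, imported, exported) in kWh, where positive readings
    count as import from the grid and negative readings as export.
    """
    hours = interval_minutes / 60.0
    imported = sum(p for p in power_kw if p > 0) * hours
    exported = -sum(p for p in power_kw if p < 0) * hours
    return imported - exported, imported, exported


def daily_offset_kwh(utility_kw, wattnode_kw, interval_minutes=15):
    """Net energy difference (Utility minus Wattnode) over the same period.

    Assumes both lists cover the same time span at the same interval.
    """
    utility_net, _, _ = integrate_kwh(utility_kw, interval_minutes)
    wattnode_net, _, _ = integrate_kwh(wattnode_kw, interval_minutes)
    return utility_net - wattnode_net
```

For example, `daily_offset_kwh([4.0, 4.0], [2.0, 2.0], 15)` totals two made-up 15-minute readings per meter and reports how many kWh higher the Utility total is. Keeping import and export as separate totals also makes it possible to see whether the offset shows up while drawing from the grid, while exporting, or both.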

I'd appreciate any help I can get. Thanks

Moderator's Note:

Please don't post your email address on the forum. It attracts spam bots to both the forum and you. The email address has been deleted.

