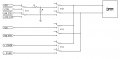

For an upcoming project I need multiple (20+?) signal relays to build a DMM tree (many pairs of inputs, one output pair).

I'm looking for preassembled boards with USB (or similar) control and multiple DPST or DPDT relays whose contacts are rated 2 A or less. Higher-current contacts tend to oxidize unless they switch large currents, which these never will.

Something similar to the SainSmart 16-Channel 9-36V USB Relay Module would be great, but with signal relays.

Anyone know of any?
