Hi!

First off, I'm rather new to electronics, and I can't quite figure this out:

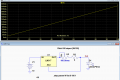

I have some LEDs and low-power laser diodes that I'd like to power with a proper circuit rather than just a lab power supply. From googling, I've found that the easiest way to do this is probably an LM317 in a circuit similar to the one attached, which I found in the datasheet of an LM317T. Other circuits used for the same purpose looked similar, with minor differences.

As far as I can tell, my output current = reference voltage (1.25V) / R1. So if my laser diode needs 250mA, I'd use two 10Ω resistors in parallel (1.25V / 5Ω = 0.25A). Correct so far?
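Here is a minimal Python sketch of that arithmetic. It assumes the nominal 1.25V reference and ignores the small current that flows out of the ADJ pin. The helper names `parallel`, `output_current`, and `resistor_power` are hypothetical and exist only for illustration. The set resistor always has the reference voltage across it, so the last helper also shows how much power that resistor has to dissipate.

```python
# Sketch only: assumes the nominal 1.25 V LM317 reference and ignores
# the ADJ pin current, which is small compared with the load current.
V_REF = 1.25  # volts across the current-setting resistor


def parallel(*resistors: float) -> float:
    """Equivalent resistance of resistors connected in parallel (ohms)."""
    return 1.0 / sum(1.0 / r for r in resistors)


def output_current(r_set: float) -> float:
    """Output current of the LM317 current source: I = V_REF / R (amps)."""
    return V_REF / r_set


def resistor_power(r_set: float) -> float:
    """Power dissipated in the set resistor: P = V_REF^2 / R (watts)."""
    return V_REF ** 2 / r_set


r_set = parallel(10.0, 10.0)  # two 10 ohm resistors in parallel
print(f"R = {r_set:.2f} ohm, I = {output_current(r_set) * 1000:.0f} mA, "
      f"P = {resistor_power(r_set):.3f} W")
```

Two 10Ω resistors give 5Ω and 250mA, which matches the hand calculation. The pair then dissipates about 0.31W in total, roughly 0.16W each.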

This would work, but I want to be able to adjust the output current. I've seen people use 100Ω potentiometers, but I don't need more than about 15Ω, since anything above that gives an output current below 100mA, which I don't need. I also don't want 75% of the potentiometer's range to be unusable; adjusting within the small usable part that's left would probably be so finicky that I'd fry the diode.
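To put a number on how finicky a large potentiometer would be, here is a rough Python sketch. It assumes three things: the potentiometer alone serves as the set resistor, it has a linear taper, and the target window is 100mA to 250mA as in the post. The function names are hypothetical. Using the same I = 1.25V / R relation, the sketch computes what fraction of the pot's total resistance falls inside that window.

```python
# Sketch only: assumes a linear-taper pot used as the whole set resistor
# and the nominal 1.25 V LM317 reference.
V_REF = 1.25  # volts


def resistance_for_current(i_amps: float) -> float:
    """Set resistance that gives the requested output current (ohms)."""
    return V_REF / i_amps


def usable_fraction(pot_ohms: float, i_min: float, i_max: float) -> float:
    """Fraction of a linear pot's travel that yields currents in [i_min, i_max]."""
    r_low = resistance_for_current(i_max)  # smallest R -> largest current
    r_high = min(resistance_for_current(i_min), pot_ohms)  # capped at pot value
    return max(0.0, r_high - r_low) / pot_ohms


for pot in (100.0, 15.0):
    frac = usable_fraction(pot, i_min=0.100, i_max=0.250)
    print(f"{pot:.0f} ohm pot: {frac:.1%} of the travel covers 100-250 mA")
```

On a 100Ω pot, only the 5Ω to 12.5Ω stretch lands in that window, which is about 7.5% of the travel. On a 15Ω pot, it is about half. The sketch does not model the region near 0Ω, where the current would rise well past 250mA.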

When I look for 10Ω potentiometers, I can only find ones meant for very high-power applications, and they're usually rather expensive too.

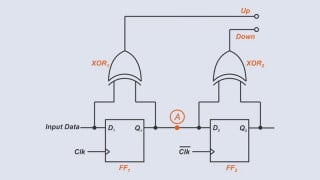

Also, why do so many of these circuits have multiple resistors in series (e.g. R1 and R2 in the attached picture)?

There must be a better way, right? Or do I just have the wrong approach to this?

This probably isn't a very hard question, but I seem to be missing something here. Any help or ideas would be appreciated! Thank you.