I've seen a lot of threads recently in which someone asks for advice on measuring current with a meter. Often it's someone who doesn't realize that you must change your probe/meter connections and also break the circuit you intend to test so the meter sits in series with it. Quite often an experienced member here starts by telling them that they don't need to measure current directly, because they can just measure the voltage across a resistor of known value.

While I understand the concept, and it certainly is easier and more direct to do it that way in some cases, it seems like there are also plenty of cases where it would be much simpler and/or more accurate to use the meter's current setting.

If there's a circuit that doesn't run all of its current through a known resistance, then there's no way to measure voltage across a resistor to find current, unless you break the circuit and insert your own sense resistor. If you're at the point of breaking the circuit, why not just use your meter's current setting at that point?

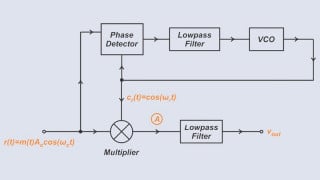

Here's a schematic for a circuit I made recently (with a lot of help here) to switch an output and limit the total current on that output in order to protect the circuit in case of a shorted wire on the output:

In order to test the short-circuit protection/current limiting, I used my meter's current setting and shorted the output to ground (the load resistor in the schematic was a dummy load for simulation, not present in the real circuit).

At first I was going to show this schematic as an example of a circuit you'd have to use an external sense resistor for, but I finally realized that the voltage across R6 would give you a really close approximation of output current (it would also include the LED current). Even with the R6 idea, if you haven't measured the actual resistance of R6 before building a circuit around it, your voltage-to-current calculation will only be as accurate as R6's tolerance.
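To put a number on how loose that calculation can get, here's a rough Python sketch of the Ohm's law arithmetic. The function name, the 1 ohm value, the 0.5 V reading and the 5% tolerance are all made up for illustration; the actual value and tolerance of R6 aren't stated here, and the sketch ignores the meter's own voltage error.

```python
def current_from_sense_voltage(v_sense, r_nominal, tolerance=0.05):
    """Estimate current from the voltage measured across a resistor.

    v_sense   -- measured voltage across the resistor, in volts
    r_nominal -- marked (unmeasured) resistor value, in ohms
    tolerance -- fractional resistor tolerance (0.05 = 5%); an assumed value
    Returns (nominal, lowest, highest) current in amps.
    """
    if r_nominal <= 0:
        raise ValueError("r_nominal must be positive")
    if not 0 <= tolerance < 1:
        raise ValueError("tolerance must be a fraction in [0, 1)")

    nominal = v_sense / r_nominal                      # I = V / R
    at_r_max = v_sense / (r_nominal * (1 + tolerance)) # resistor at top of its range
    at_r_min = v_sense / (r_nominal * (1 - tolerance)) # resistor at bottom of its range
    # min/max keeps the bounds ordered even if the reading is negative
    return nominal, min(at_r_max, at_r_min), max(at_r_max, at_r_min)


# Hypothetical reading: 0.5 V across a resistor marked 1 ohm, 5% tolerance
nominal, low, high = current_from_sense_voltage(0.5, 1.0, 0.05)
print(f"{nominal * 1000:.0f} mA (between {low * 1000:.0f} and {high * 1000:.0f} mA)")
# prints: 500 mA (between 476 and 526 mA)
```

With those made-up numbers the spread is about 5% either way, so measuring the resistor first (and passing a smaller tolerance) is what tightens the result.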

Kind of rambled here, but here's my real question. Is there a reason so many people seem to really dislike using a meter's current setting? Seems to me like whatever is most convenient for any given circuit makes sense, but that's not the impression I get on these forums.

