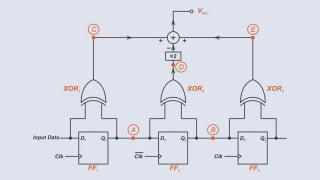

I have an analog signal (in blue) that I need to decode into 1's and 0's using an ADC. The signal is Manchester encoded and has a frequency of approximately 18 kHz. As can be seen from the diagram, though, the frequency is not quite the same for all the bits: it varies slightly from one bit to the next, while staying roughly constant overall. My solution is to start the ADC with an appropriate sampling frequency at the start of the data stream, take 'n' samples over a window (4-8 bit periods), calculate the threshold/midpoint ADC value, and decide 0 or 1 based on an 80% majority vote over every 'n' samples. This doesn't always work, because the window drifts due to the frequency variation mentioned above. Is there a more reliable or more elegant way to do this in software? Thanks in advance. (PS: The analog signal is the output of a differentiator, and I have the flexibility to change the waveform so that it looks more or less like a square wave.)
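For reference, here is a minimal Python sketch of the approach described above, not the actual firmware. The function names, the ADC values, and the choice of `n` samples per voting group are assumptions made for illustration; how a voting group maps onto a Manchester bit or half-bit is left out. A group that fails to reach the 80% majority returns `None`, which is roughly what happens once the window has drifted across a transition.

```python
from typing import List, Optional, Sequence


def midpoint_threshold(window: Sequence[int]) -> float:
    """Midpoint between the lowest and highest ADC value in the window."""
    return (min(window) + max(window)) / 2.0


def majority_vote(samples: Sequence[int], threshold: float,
                  majority: float = 0.8) -> Optional[int]:
    """Return 1 or 0 if at least `majority` of the samples agree, else None."""
    if not samples:
        return None
    ones = sum(1 for s in samples if s > threshold)
    zeros = len(samples) - ones
    needed = majority * len(samples)
    if ones >= needed:
        return 1
    if zeros >= needed:
        return 0
    return None  # no clear majority, e.g. the group straddles a transition


def decode_levels(adc: Sequence[int], n: int,
                  majority: float = 0.8) -> List[Optional[int]]:
    """Split the samples into fixed groups of n (hypothetical group size)
    and vote on each group using a single midpoint threshold."""
    threshold = midpoint_threshold(adc)
    return [majority_vote(adc[i:i + n], threshold, majority)
            for i in range(0, len(adc) - n + 1, n)]


# Groups aligned with the signal: every vote is decisive.
aligned = [100] * 10 + [900] * 10 + [100] * 10
# Second level lasts 13 samples instead of 10: the last group is ambiguous.
drifted = [100] * 10 + [900] * 13 + [100] * 7
```

With these made-up values, `decode_levels(aligned, 10)` gives a decisive vote for all three groups, while in `decode_levels(drifted, 10)` the third group holds a 30/70 mix and cannot reach the 80% majority.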
