I am looking for help understanding the basics of a real-time spectrum analyzer built around a microcontroller.

A real-time analyzer is a system that displays the frequency spectrum of an audio signal.

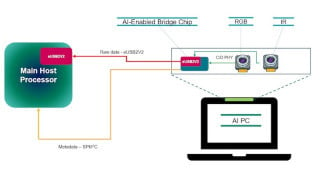

This figure gives me a good start, but I still have some doubts.

I think the microcontroller takes the audio input and converts it to digital samples. Each sample is stored in buffer memory. The frequency content of the audio signal can then be calculated using a Fast Fourier Transform (FFT), and the system displays the resulting frequency spectrum.
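To make my current understanding concrete, here is a rough sketch of how I picture the flow. It is plain Python running on a PC, not microcontroller code. It assumes the transform runs on a whole buffer of samples rather than on a single one, which is part of what I am unsure about. The sample rate, block size, and all function names are my own assumptions for illustration. I used a plain DFT, which should give the same magnitudes as an FFT, only more slowly.

```python
import cmath
import math

SAMPLE_RATE_HZ = 8000   # assumed ADC sampling rate
BLOCK_SIZE = 64         # assumed number of samples collected per block


def read_adc_block(tone_hz, n=BLOCK_SIZE, fs=SAMPLE_RATE_HZ):
    """Stand-in for ADC sampling: fills a buffer with n samples of a test sine."""
    return [math.sin(2 * math.pi * tone_hz * k / fs) for k in range(n)]


def magnitude_spectrum(samples):
    """Plain DFT over the whole buffer; returns magnitudes for bins 0 .. n/2 - 1."""
    n = len(samples)
    mags = []
    for b in range(n // 2):
        acc = sum(x * cmath.exp(-2j * math.pi * b * k / n)
                  for k, x in enumerate(samples))
        mags.append(abs(acc))
    return mags


def bin_to_hz(b, n=BLOCK_SIZE, fs=SAMPLE_RATE_HZ):
    """Centre frequency of bin b for a block of n samples taken at fs."""
    return b * fs / n


def render_bars(mags, width=20):
    """Stand-in for the LCD update: one text bar per frequency bin."""
    peak = max(mags) or 1.0
    return ["#" * round(width * m / peak) for m in mags]


# One pass of the loop I imagine: sample a block, transform it, draw it.
block = read_adc_block(tone_hz=1000)
mags = magnitude_spectrum(block)
peak_bin = max(range(len(mags)), key=mags.__getitem__)
bars = render_bars(mags)
print(peak_bin, bin_to_hz(peak_bin))
```

With these assumed values, each bin is 8000 / 64 = 125 Hz wide, so the 1000 Hz test tone should land in bin 8. In this picture a single sample has no frequency of its own; the spectrum only exists for a block of samples.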

The system has three tasks to perform: ADC sampling, the FFT operation, and the LCD update.

I am trying to understand how the microcontroller performs these three tasks.

I am not sure whether I have understood the concept correctly.

Does the microcontroller take one sample, calculate its frequency, display it on the screen, and then repeat the whole process continuously from the start?
