The Physics of A.I.

- Thread starter nsaspook



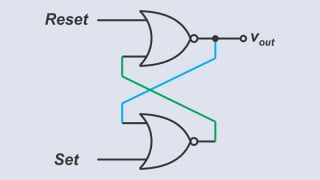

schmitt trigger

- Joined Jul 12, 2010

- 2,074

Thanks for sharing.

It is one of the most down-to-earth explanations of AI.

Having said this, I will have to view the video at least a couple of times to fully grasp all the information provided.


Interesting, but how far do you trust someone who doesn't know how to pronounce "Gaussian"?

I know you are joking (hopefully!), but I could retort:

How far do you trust someone who argues ad hominem?

https://medium.com/@Elongated_musk/...rmodynamics-may-decide-ai-safety-6963bfcd3c7c

Heat, Not Halting Problems: Why Thermodynamics May Decide AI Safety


Perhaps the most far-reaching constraint is the electric grid itself. AI’s appetite for electricity is growing so fast that planners and policymakers have taken notice. In 2023, global data centers consumed roughly 240 TWh of electricity, about 1% of world demand. By 2030, even conservative projections (pre-AI-boom) put this near ~520 TWh, and newer analyses that factor in widespread AI adoption suggest data center usage could more than double to ~945 TWh (IEA, 2025). To put that in perspective, 945 TWh is about the current annual electricity consumption of Japan. A significant fraction of this surge comes from AI training and inference workloads. Unlike traditional cloud services (which often sit idle or handle sporadic user queries), cutting-edge AI models tend to run on power-hungry accelerators at high utilization. When a tech firm trains a frontier model with trillions of parameters, it can draw tens of megawatts continuously for weeks. When millions of users start running AI assistants all day, the inference computations likewise add up on the grid.

In the end, the most potent “alignment” mechanism for advanced AI might not be an algorithm at all — it could be the humble power meter. An AI that might stray from human interests still cannot defy a blackout or a blown fuse. By recognizing energy, heat, and infrastructure as fundamental constraints, we add tangible safety layers to the abstract problem of AI alignment. The future of AI may well be decided not only in code and ethics, but in watts and degrees. After all, an AI can only run as hot as the laws of physics (and the utility bill) will allow. In the contest between an all-powerful AI and the second law of thermodynamics, bet on the law.
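For a sense of scale, the figures quoted in the excerpt can be checked with a few lines of arithmetic. This is only a rough sketch: the 240 TWh, 1%, and 945 TWh values are taken from the excerpt as quoted, while the 30 MW and 4 week training run is a hypothetical example chosen to match "tens of megawatts continuously for weeks", not a number from the article.

```python
# Back-of-the-envelope check of the figures quoted in the excerpt.
HOURS_PER_WEEK = 7 * 24


def training_energy_twh(power_mw: float, weeks: float) -> float:
    """Energy in TWh for a constant draw of power_mw sustained for `weeks`."""
    energy_mwh = power_mw * weeks * HOURS_PER_WEEK
    return energy_mwh / 1_000_000  # 1 TWh = 1,000,000 MWh


# Quoted: 240 TWh in 2023 was about 1% of world electricity demand,
# so the implied world demand is 100 times that figure.
implied_world_demand_twh = 240 * 100

# Quoted projection of ~945 TWh, relative to the quoted 2023 figure.
growth_factor = 945 / 240

# Hypothetical training run (assumed values, not from the article).
run_twh = training_energy_twh(power_mw=30, weeks=4)

print(f"Implied world demand: {implied_world_demand_twh} TWh")
print(f"945 TWh vs 240 TWh:   {growth_factor:.2f}x")
print(f"30 MW for 4 weeks:    {run_twh:.5f} TWh")
```

Under these assumptions a single training run is a tiny fraction of the yearly total, which fits the excerpt's point that the aggregate of many training and inference workloads is what loads the grid.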

China better start powering up some more coal power plants!

https://www.tiktok.com/@the_happy_hour__/video/7343780014938164512

The notion that all AI models are related to a Gaussian distribution is incorrect.

If you set parameters to resolve an infinitely wide field then you get a Gaussian distribution.

A huge model with a billion parameters is very small compared to infinity.

Gravitational lensing, a consequence of the curvature of space-time (Albert Einstein, 1916), is not a universal relationship to AI models or how they work. A metaphor that is sometimes used is "lensing".

There does exist a legitimate model in QFT that is configured in that way.

[2506.04335] Emergent curved space and gravitational lensing in quantum materials

The Standard Model Quantum Field Theory is the most experimentally supported QFT ever constructed.


The video referred specifically to neural networks and clarified that they approach a Gaussian distribution as they evolve, but practical networks deviate in ways that must be accounted for. Gravitational lensing was not discussed; the general idea of QFT was.

Your comment is confusing and seems very loosely coupled to the content of the video.
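The point about networks approaching a Gaussian distribution, with finite networks deviating from it, can be illustrated numerically. The following is a minimal sketch and not the derivation from the video: the one-hidden-layer tanh architecture, the standard-normal weights, the 1/sqrt(width) output scaling, and the function names are all assumptions made for illustration. It samples many randomly initialised networks at a fixed input and measures the excess kurtosis of their outputs, which is zero for an exact Gaussian.

```python
import math
import random


def network_output(width: int, x: float, rng: random.Random) -> float:
    """Output of one randomly initialised one-hidden-layer tanh network."""
    total = 0.0
    for _ in range(width):
        w = rng.gauss(0.0, 1.0)  # input-to-hidden weight
        v = rng.gauss(0.0, 1.0)  # hidden-to-output weight
        total += v * math.tanh(w * x)
    # Scaling by 1/sqrt(width) keeps the output variance independent of width.
    return total / math.sqrt(width)


def excess_kurtosis(samples: list[float]) -> float:
    """Fourth standardised moment minus 3 (zero for a Gaussian)."""
    n = len(samples)
    mean = sum(samples) / n
    m2 = sum((s - mean) ** 2 for s in samples) / n
    m4 = sum((s - mean) ** 4 for s in samples) / n
    return m4 / (m2 * m2) - 3.0


def kurtosis_by_width(widths, n_networks=10_000, x=1.0, seed=0):
    """Estimate the excess kurtosis of the output distribution per width."""
    rng = random.Random(seed)
    return {
        width: excess_kurtosis(
            [network_output(width, x, rng) for _ in range(n_networks)]
        )
        for width in widths
    }


result = kurtosis_by_width([1, 4, 32])
for width, kurt in result.items():
    print(f"width={width:3d}  excess kurtosis={kurt:+.3f}")
```

In this toy setup the narrow network has clearly heavier tails than a Gaussian, and the excess kurtosis shrinks roughly in proportion to 1/width, so the deviation at finite width is small but not zero.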
