Hi. If I have an input voltage of 14.5 V, an input current of 1 A, and a resistor of 0.25 ohm, what power rating does the resistor need so that it won't burst into flames? Thanks in advance.

Resistor Power rating

- Thread starter Tan Kwan Yean



Lestraveled

- Joined May 19, 2014

- 1,946

14.5 volts divided by 0.25 ohms equals 58 amps. You say your input current is only 1 amp, so your supply cannot deliver 58 amps. To that supply, the 0.25 ohm resistor will look about the same as a dead short.

Edit: I assumed the .25 ohm resistor was the load. My mistake.


I assumed the resistor was in series with whatever the REAL load is, not the load itself. But your assumption may be the right one.

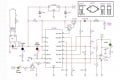

The 0.25 ohm resistor is the current limiter for a charger circuit. I have an output from a buck converter of 14.5 V and 10 A. This output is split across 10 charging circuits, as shown in the attachment. Ideally, after the split, each charging circuit will have 14.5 V and 1 A as input. What should the power rating of resistor Rs be?


john*michael

- Joined Sep 18, 2014

- 43

Looks like the current limiter is set up so that the voltage across the sense resistor is 0.25 volts. Since you are using a 0.25-ohm resistor, you will have a maximum of 1 amp going to the battery, so the power is 0.25V x 1 amp or 0.25W.
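As a minimal sketch of that arithmetic in Python: the function names are only illustrative, and the 2x safety margin is a common rule of thumb assumed here, not something stated in this thread or in the controller's data sheet.

```python
def resistor_power(current_a, resistance_ohm):
    """Power dissipated in a resistor, P = I^2 * R, in watts."""
    return current_a ** 2 * resistance_ohm


def suggested_rating(power_w, margin=2.0):
    """Dissipation scaled by a safety margin (assumed rule of thumb)."""
    return power_w * margin


# Sense resistor from the thread: 0.25 ohm carrying at most 1 A.
p_sense = resistor_power(1.0, 0.25)
print(f"Dissipation: {p_sense:.2f} W")
print(f"Suggested minimum rating: {suggested_rating(p_sense):.2f} W")
```

With the thread's values this gives 0.25 W of dissipation, so a 0.5 W part would leave the assumed 2x margin.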

Lestraveled

- Joined May 19, 2014

- 1,946

What prevents each battery from drawing more than 1 amp?

Apparently 9 more circuits as in post #6, but I am still confused by the ESL difficulties.

Lestraveled

- Joined May 19, 2014

- 1,946

Tan Kwan Yean

With only 0.25 ohms in series with your power supply and battery, small changes in battery voltage will cause large changes in charge current. May I suggest that you consider a higher power supply voltage and a higher-resistance series resistor. The higher the voltage and resistance are, the less the charge current will change as the battery voltage changes. Calculate the current at max and min battery voltage and you will see that this is true.


@Lestraveled I will try to do that. What is the formula for it? By the way, Rs = 0.25 / Imax according to the UC2906 data sheet.

Lestraveled

- Joined May 19, 2014

- 1,946

OK

Assume a battery low (discharged) voltage of 12.0V and a high (charged) voltage of 14.4V.

Case #1, power supply = 14.5V and Rseries = .25 ohms.

Vbat = 12.0V, Then, I = (14.5 - 12)/.25 = 10 amps

Vbat = 14.4V, Then, I = (14.5 - 14.4)/.25 = .4 amps

Case #2 power supply = 18V and Rseries = 6 ohms

Vbat = 12.0V, Then, I = (18 - 12)/6 = 1 amp

Vbat = 14.4V, Then, I = (18 - 14.4)/6 = .6 amps

OK, do you see what I am talking about?
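The two cases above can be checked with a short Python sketch. It assumes the simple model used in this post, where the battery is a fixed voltage and the only thing limiting current is the series resistor, so I = (Vsupply - Vbat) / Rseries. The function and variable names are illustrative.

```python
def charge_current(v_supply, v_batt, r_series):
    """Charge current through a plain series resistor, in amps."""
    return (v_supply - v_batt) / r_series


V_BATT_LOW = 12.0   # discharged battery voltage assumed in the post
V_BATT_HIGH = 14.4  # charged battery voltage assumed in the post

cases = [
    ("Case 1", 14.5, 0.25),  # (label, supply volts, series ohms)
    ("Case 2", 18.0, 6.0),
]

for label, v_supply, r_series in cases:
    i_low = charge_current(v_supply, V_BATT_LOW, r_series)
    i_high = charge_current(v_supply, V_BATT_HIGH, r_series)
    # Worst-case resistor dissipation occurs at the highest current.
    p_max = i_low ** 2 * r_series
    print(f"{label}: {i_low:.2f} A discharged, {i_high:.2f} A charged, "
          f"ratio {i_low / i_high:.1f}, resistor up to {p_max:.1f} W")
```

In this model the current in Case 1 swings by a factor of about 25 between a discharged and a charged battery, while in Case 2 it stays within a factor of 2. The price is more heat in the larger series resistor at its normal operating current.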


OK, now I get it. Thanks for the help and explanation.

john*michael

- Joined Sep 18, 2014

- 43

The UC3906 senses current through Rs in your charging circuit. The device uses the voltage drop across this resistor as feedback to set the maximum current that the charger will provide to the battery. The voltage across this resistor is compared to 0.25 volts and limited to this value by adjusting the base drive for the Darlington pair. So the voltage across the resistor is always 0.25 volts or less.

Your circuit has a 20 volt power supply. The sense resistor will drop 0.25 volts at 1 amp, dissipating 0.25 watts, as explained above. The BUX10 will take the remaining voltage drop at 1 amp. If you are charging a 12-volt battery, and assuming a 0.7 volt drop across the MUR1540, you will have 20 - 0.25 (from Rs) - 0.7 (from MUR1540) - 12 (from the battery), or a little over 7 volts from collector to emitter of the BUX10. So the BUX10 will be the part dissipating most of your power, in this case 7 volts x 1 amp or 7 watts. It will need to be attached to a heat sink.
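A rough Python sketch of that voltage budget follows. The 0.7 V diode drop and the 1 A charge current are the assumptions from this post, the names are illustrative, and base drive and any other losses are ignored.

```python
def pass_transistor_vce(v_supply, v_sense, v_diode, v_batt):
    """Voltage left across the pass transistor after the other series drops."""
    return v_supply - v_sense - v_diode - v_batt


I_CHARGE = 1.0  # amps, the current limit set by the 0.25 ohm sense resistor

v_ce = pass_transistor_vce(v_supply=20.0, v_sense=0.25, v_diode=0.7, v_batt=12.0)
p_transistor = v_ce * I_CHARGE   # heat in the BUX10
p_sense = 0.25 * I_CHARGE        # heat in Rs, for comparison

print(f"V_CE = {v_ce:.2f} V, transistor dissipation = {p_transistor:.2f} W")
print(f"Sense resistor dissipation = {p_sense:.2f} W")
```

Under these assumptions the transistor dissipates about 7 W against 0.25 W in the sense resistor, which is why the heat sink belongs on the BUX10 and not on Rs. A more deeply discharged battery would raise the transistor figure further.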

