I'm new to the forum. For my school project I have two 40 kHz sine wave inputs. Both signals are repeatedly on for 3 ms and then off for 3 seconds, and there is a very small time delay between them (possibly on the order of 1 µs). My goal is to measure this time difference.

I have been looking at a few different approaches:

1. Use a rectifier to rectify the inputs and deliver the resulting square wave to an Arduino, then find the time difference by comparing the timing of the edges.

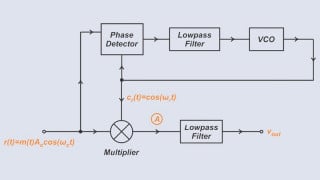

2. Someone told me to use a phase detector, whose output carries information about the two inputs. I have never used one before, so I wonder how I should use the phase detector's output in my microcontroller.

3. Which way will give me a more precise result?
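
To make the comparison concrete, here is a rough sketch of the arithmetic behind both options, not a tested design. Only the 40 kHz signal frequency comes from my setup. The 16 MHz timer clock, the 5 V supply, the 10-bit ADC, and the idea of feeding both squared-up signals into an XOR-type phase detector and averaging its output are all assumptions, and the function names are made up for illustration.

```python
F_SIGNAL_HZ = 40_000       # signal frequency from the project description
F_TIMER_HZ = 16_000_000    # assumption: timer clocked at 16 MHz
V_SUPPLY = 5.0             # assumption: 5 V logic and ADC reference
ADC_COUNTS = 1024          # assumption: 10-bit ADC


def edge_timing_resolution_s(f_timer_hz=F_TIMER_HZ):
    """Smallest time step when timestamping edges with a timer (option 1)."""
    return 1.0 / f_timer_hz


def xor_average_voltage(delay_s, f_signal_hz=F_SIGNAL_HZ, v_supply=V_SUPPLY):
    """Average output of an ideal XOR phase detector (option 2).

    For two square waves of the same frequency, the XOR output is high
    for a fraction 2 * delay / period of each cycle, valid for delays
    between 0 and half a period.
    """
    period_s = 1.0 / f_signal_hz
    duty = 2.0 * delay_s / period_s
    if not 0.0 <= duty <= 1.0:
        raise ValueError("delay must be between 0 and half a period")
    return v_supply * duty


def delay_from_adc(counts, f_signal_hz=F_SIGNAL_HZ, adc_counts=ADC_COUNTS):
    """Convert an ADC reading of the averaged XOR output back to a delay."""
    period_s = 1.0 / f_signal_hz
    return (counts / adc_counts) * (period_s / 2.0)


if __name__ == "__main__":
    print("timer step (s):", edge_timing_resolution_s())
    print("XOR average for 1 us delay (V):", xor_average_voltage(1e-6))
    print("delay per ADC count (s):", delay_from_adc(1))
```

Under these assumptions one timer tick is 62.5 ns, while one ADC count of the averaged XOR output corresponds to roughly 12 ns. That ignores noise, comparator jitter, and the fact that any averaging filter has to settle within the 3 ms burst, so it is only an upper bound on what either approach could resolve.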

