A 6502 is not a brain, but it is several orders of magnitude less complex than one. The black-box approach fails even here: the methods of neuroscience cannot properly reverse engineer an extremely simple, deterministic computer chip.

http://www.economist.com/news/scien...bl/n/20170119n/owned/n/n/nwl/n/n/NA/8643534/n

http://journals.plos.org/ploscompbiol/article?id=10.1371/journal.pcbi.1005268

Gaël Varoquaux, a machine-learning specialist at the Institute for Research in Computer Science and Automation, in France, says that the 6502 in particular is about as different from a brain as it could be. Such primitive chips process information sequentially. Brains (and modern microprocessors) juggle many computations at once. And he points out that, for all its limitations, neuroscience has made real progress. The ins-and-outs of parts of the visual system, for instance, such as how it categorises features like lines and shapes, are reasonably well understood.

Dr Jonas acknowledges both points. “I don’t want to claim that neuroscience has accomplished nothing!” he says. Instead, he goes back to the analogy with the Human Genome Project. The data it generated, and the reams of extra information churned out by modern, far more capable gene-sequencers, have certainly been useful. But hype-fuelled hopes of an immediate leap in understanding were dashed. Obtaining data is one thing. Working out what they are saying is another.

Here we will try to understand a known artificial system, a classical microprocessor, by applying data analysis methods from neuroscience. We want to see what kind of an understanding would emerge from using a broad range of currently popular data analysis methods. To do so, we will analyze the connections on the chip, the effects of destroying individual transistors, single-unit tuning curves, the joint statistics across transistors, local activities, estimated connections, and whole-device recordings. For each of these, we will use standard techniques that are popular in the field of neuroscience. We find that many measures are surprisingly similar between the brain and the processor but that our results do not lead to a meaningful understanding of the processor. The analysis cannot produce the hierarchical understanding of information processing that most students of electrical engineering obtain. It suggests that the availability of unlimited data, as we have for the processor, is in no way sufficient to allow a real understanding of the brain. We argue that when studying a complex system like the brain, methods and approaches should first be sanity checked on complex man-made systems that share many of the violations of modeling assumptions of the real system.
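To make "the effects of destroying individual transistors" concrete, here is a minimal sketch of a lesion study on a toy circuit. It is not the paper's method or code: the netlist below is a hypothetical 1-bit full adder built from NAND gates, lesions act on whole gates rather than transistors, and the names `NETLIST`, `simulate`, and `lesion_study` are invented for illustration.

```python
from itertools import product

# Hypothetical toy netlist (an assumption, not the 6502): a 1-bit full adder.
# Each output wire is driven by a NAND gate over two input wires.
# Entries are listed in evaluation (topological) order.
NETLIST = {
    "n1": ("a", "b"),
    "n2": ("a", "n1"),
    "n3": ("b", "n1"),
    "x1": ("n2", "n3"),    # a XOR b
    "n4": ("x1", "cin"),
    "n5": ("x1", "n4"),
    "n6": ("cin", "n4"),
    "sum": ("n5", "n6"),   # (a XOR b) XOR cin
    "cout": ("n1", "n4"),  # carry out
}


def simulate(a, b, cin, lesioned=None):
    """Evaluate the circuit; a lesioned gate has its output stuck at 0."""
    wires = {"a": a, "b": b, "cin": cin}
    for out, (x, y) in NETLIST.items():
        value = 1 - (wires[x] & wires[y])  # NAND
        wires[out] = 0 if out == lesioned else value
    return wires["sum"], wires["cout"]


def lesion_study():
    """For each gate, record which output 'behaviors' break when it is lesioned."""
    inputs = list(product((0, 1), repeat=3))
    healthy = [simulate(*i) for i in inputs]
    report = {}
    for gate in NETLIST:
        damaged = [simulate(*i, lesioned=gate) for i in inputs]
        report[gate] = {
            "sum": any(h[0] != d[0] for h, d in zip(healthy, damaged)),
            "cout": any(h[1] != d[1] for h, d in zip(healthy, damaged)),
        }
    return report


if __name__ == "__main__":
    for gate, broken in lesion_study().items():
        print(gate, broken)
```

A table like this tempts the reader to label `n5` a "sum gate" because lesioning it breaks only the sum output. That is the kind of conclusion the abstract warns about: it is true as a statement about damage, yet it says little about how the adder actually computes.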

An engineered model organism

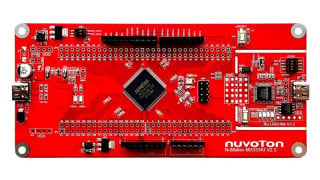

... [10] for a comprehensive review). The Visual6502 team reverse-engineered the 6507 from physical integrated circuits [11] by chemically removing the epoxy layer and imaging the silicon die with a light microscope. Much like with current connectomics work [12, 13], a combination of algorithmic and human-based approaches was used to label regions, identify circuit structures, and ultimately produce a transistor-accurate netlist (a full connectome) for this processor consisting of 3510 enhancement-mode transistors. Several other support chips, including the Television Interface Adaptor (TIA), were also reverse-engineered, and a cycle-accurate simulator was written that can simulate the voltage on every wire and the state of every transistor. The reconstruction has sufficient fidelity to run a variety of classic video games, which we will detail below. The simulation generates roughly 1.5 GB/sec of state information, allowing a real big-data analysis of the processor.
