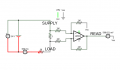

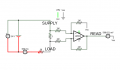

I have a drawer full of standalone voltmeter displays that I would like to repurpose as simple ammeters. The circuit I came up with to sense the current is basically a differential amp with a 1-ohm resistor between the inputs, all powered by a 9V battery. (The resistor is of the chunky 100W variety which is typically used in doorbells.) The general idea is that with a 1-ohm resistor, Ohm's law (V = I × R) makes the voltage across it numerically equal to the current through it, so the output voltage should be more or less precisely the number of amps being pulled by the external circuit.
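To put rough numbers on that idea, here is a minimal Python sketch of what the 1-ohm shunt does at a few load currents. It assumes an ideal, unity-gain differential amp (the gain is my assumption, it isn't stated above), and the function name and the list of test currents are made up purely for illustration.

```python
# Sketch: sense voltage and heat in a 1-ohm shunt at a few load currents.
# Assumes an ideal unity-gain differential amplifier (an assumption).

R_SHUNT_OHMS = 1.0       # shunt value from the post
R_RATING_WATTS = 100.0   # resistor power rating from the post


def shunt_figures(current_a, r_ohms=R_SHUNT_OHMS):
    """Return (sense voltage in volts, power dissipated in watts)."""
    v_sense = current_a * r_ohms        # Ohm's law: V = I * R
    p_diss = current_a ** 2 * r_ohms    # P = I^2 * R
    return v_sense, p_diss


# Hypothetical load currents, chosen only to illustrate the scaling.
for amps in (0.1, 0.5, 1.0, 2.0, 5.0):
    v, p = shunt_figures(amps)
    within_rating = p <= R_RATING_WATTS
    print(f"{amps:4.1f} A -> {v:4.1f} V sensed, {p:5.1f} W in shunt, "
          f"within rating: {within_rating}")
```

The sensed voltage is also the voltage the shunt takes away from the circuit being measured, so the same numbers show how much the load loses at each current.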

Looks good on paper. But I do wonder if even a single ohm might disrupt the operation of certain circuits? Also, I am planning to use the rugged LM324 (which I have plenty of, and which works well in a single-supply configuration), although I did notice during simulation that it bottoms out as a current sensor below 34mA or so (i.e. 34mV at the output). That shouldn't be too much of an issue, because I can't imagine I'd need to measure such a small current (not to mention that the last digit of the display corresponds to tens of millivolts), but it does make me wonder if I should be using a better-quality opamp (and if so, which might be best)?
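To make both worries concrete, here is a rough Python sketch. It treats the 34mV floor from my simulation as a hard clamp (a simplification), rounds to the display's 10mV last digit, and computes how much of a supply the shunt eats. The function names and the 5V and 12V example supplies are hypothetical, picked only for illustration.

```python
# Sketch: displayed reading with an output floor, plus shunt burden.
# The floor and display step come from the post; the hard-clamp model and
# the example supply voltages are assumptions.

OUTPUT_FLOOR_V = 0.034   # LM324 output floor seen in simulation
DISPLAY_STEP_V = 0.01    # last display digit = tens of millivolts
R_SHUNT_OHMS = 1.0       # shunt value from the post


def displayed_amps(current_a):
    """Reading shown on the voltmeter display for a given true current."""
    # The amplifier output cannot go below the floor (modelled as a clamp).
    v_out = max(current_a * R_SHUNT_OHMS, OUTPUT_FLOOR_V)
    # Round to the nearest display step, then convert volts back to amps.
    return round(v_out / DISPLAY_STEP_V) * DISPLAY_STEP_V / R_SHUNT_OHMS


def burden_fraction(current_a, supply_v):
    """Fraction of the supply voltage dropped across the shunt."""
    return current_a * R_SHUNT_OHMS / supply_v


for amps in (0.0, 0.02, 0.05, 0.5):
    print(f"true {amps:.3f} A -> displayed {displayed_amps(amps):.2f} A")

for supply in (5.0, 12.0):  # hypothetical supply voltages
    print(f"0.5 A from {supply:.0f} V: "
          f"{burden_fraction(0.5, supply):.1%} lost in shunt")
```

Under this model, any current below the floor shows the same small nonzero reading, and the burden matters most for low-voltage circuits drawing a lot of current.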

Finally, are there any other issues with the design that stand out?
