Hello All,

If I had a simple circuit with a 12V battery and an LED that drops 2V and needs 20mA, I'd need a resistor to drop the remaining 10V and set the current at 20mA, so R = 10/0.020 = 500 Ohms.

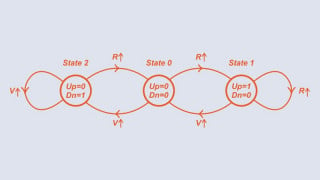
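The resistor sizing above is Ohm's law applied to the voltage left over after the LED. A minimal Python sketch, assuming the LED holds a fixed forward voltage regardless of current (a simplification); the function name `series_resistor` is hypothetical:

```python
def series_resistor(v_supply, v_forward, i_led):
    """Resistance (ohms) needed in series with an LED at a target current.

    Assumption: the LED drops a fixed v_forward, so the resistor must drop
    everything that is left over.
    """
    v_resistor = v_supply - v_forward  # voltage the resistor has to drop
    return v_resistor / i_led          # Ohm's law: R = V / I

# The 12V / 2V / 20mA case from the post, plus the 40mA case.
for i_led in (0.020, 0.040):
    print(f"{i_led * 1000:.0f} mA -> {series_resistor(12.0, 2.0, i_led):.0f} ohm")
```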

1. Is it possible to 'use up' the voltage before it reaches the LED? For example, if the resistor is placed before the LED and I wanted it to drop the whole 12V, would a 600 Ohm resistor drop 12V and leave nothing for the LED?

2. If I wanted 40mA to flow through the LED, I could use R = 10/0.040 = 250 Ohms. Looking at the voltage-current curve for an LED, to increase the current through it I'd also have to increase the voltage (albeit slightly), but I'd still only have 2V left after the resistor. How can the LED carry 40mA when it only has 2V available after the resistor? Doesn't that go against the voltage-current curve?
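For question 1, a sketch using a simplified fixed-drop LED model (an assumption; `led_circuit` is a hypothetical helper) shows how a series circuit splits the voltage: the current is set by the voltage left after the LED, and the resistor's drop is that current times R. A larger resistor lowers the current rather than absorbing the whole 12V.

```python
def led_circuit(v_supply, v_forward, r):
    """Return (current, resistor drop, LED drop) for battery + resistor + LED in series.

    Assumed model: the LED conducts only when it gets at least v_forward,
    and then drops exactly v_forward; the resistor takes the remainder.
    """
    if v_supply <= v_forward:
        # Not enough voltage to turn the LED on: no current, so no resistor drop.
        return 0.0, 0.0, v_supply
    current = (v_supply - v_forward) / r  # Ohm's law on the leftover voltage
    v_resistor = current * r              # equals v_supply - v_forward
    return current, v_resistor, v_forward

for r in (500.0, 600.0):
    i, v_r, v_led = led_circuit(12.0, 2.0, r)
    print(f"R = {r:.0f} ohm: {i * 1000:.1f} mA, resistor {v_r:.2f} V, LED {v_led:.2f} V")
```

In this model, swapping 500 Ohms for 600 Ohms drops the current to about 16.7mA while the resistor still drops about 10V; as long as current flows, the LED keeps its forward voltage and the resistor gets the rest.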
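For question 2, the fixed 2V figure is itself the approximation. A real LED's voltage depends on its current, so the circuit settles where the resistor's current (12V - V_LED)/R equals the LED's current at V_LED (the load-line intersection). The sketch below models the LED with the Shockley diode equation using hypothetical parameters (`N_VT`, and a saturation current chosen so the LED drops exactly 2.0V at 20mA), not values from any real datasheet, and finds the operating point by bisection:

```python
import math

# Hypothetical LED parameters, for illustration only:
N_VT = 0.05                 # ideality factor times thermal voltage, in volts (assumed)
V_REF, I_REF = 2.0, 0.020   # assume the LED drops exactly 2.0 V at 20 mA
I_S = I_REF / math.expm1(V_REF / N_VT)  # saturation current implied by that point

def led_current(v):
    """Shockley diode equation: LED current (A) at forward voltage v (V)."""
    return I_S * math.expm1(v / N_VT)

def operating_point(v_supply, r, iterations=100):
    """Bisect for the LED voltage where resistor current equals LED current."""
    lo, hi = 0.0, v_supply
    for _ in range(iterations):
        v = (lo + hi) / 2
        # If the resistor would pass more current than the LED draws at v,
        # the real operating point lies at a higher LED voltage.
        if (v_supply - v) / r > led_current(v):
            lo = v
        else:
            hi = v
    v_led = (lo + hi) / 2
    return v_led, (v_supply - v_led) / r

for r in (500.0, 600.0, 250.0):
    v_led, i = operating_point(12.0, r)
    print(f"R = {r:.0f} ohm: LED {v_led:.3f} V, resistor {12.0 - v_led:.3f} V, {i * 1000:.1f} mA")
```

With 250 Ohms this model settles a few tens of millivolts above 2V, so the resistor drops slightly less than 10V and the current comes out slightly under 40mA. The voltage after the resistor is not fixed at 2V; it is whatever the LED needs at the current that actually flows. Real LEDs also have series resistance and temperature effects that this sketch ignores.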

If anyone could help clear things up a bit, it would be appreciated.

Thanks.
