Hi,

I got some text from the internet about password entropy:

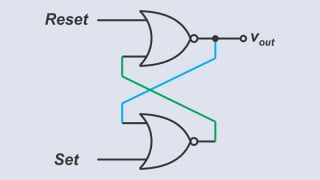

It is usual in the computer industry to specify password strength in terms of information entropy, which is measured in bits and is a concept from information theory. Instead of the number of guesses needed to find the password with certainty, the base-2 logarithm of that number is given, which is commonly referred to as the number of "entropy bits" in a password, though this is not exactly the same quantity as information entropy.

For example, if we have a 3-bit password, then the possible number of guesses is 8. So is 3 the entropy?
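
As a quick check of the arithmetic in the quoted definition, here is a minimal Python sketch. The function name `entropy_bits` is made up for illustration, and it assumes every possible password is equally likely, so the number of guesses needed to find the password with certainty equals the number of possible passwords.

```python
import math


def entropy_bits(num_possible_passwords: int) -> float:
    """Return the base-2 logarithm of the number of possible passwords.

    Assumes every candidate password is equally likely (an illustrative
    simplification, not a claim about real-world passwords).
    """
    if num_possible_passwords < 1:
        raise ValueError("need at least one possible password")
    return math.log2(num_possible_passwords)


# A 3-bit password has 2**3 = 8 possible values.
print(entropy_bits(2 ** 3))  # 3.0

# Hypothetical comparison: 8 characters drawn from 26 lowercase letters.
print(entropy_bits(26 ** 8))  # roughly 37.6
```

Under that convention the 3-bit case does come out as 3 "entropy bits", though the quoted text itself cautions that this is not exactly the same quantity as information entropy.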

Somebody please guide me.

Zulfi.
