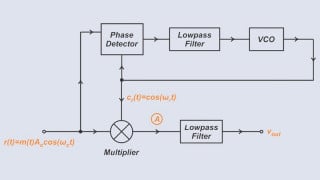

I want to simulate a decoder-based D/A converter in LTspice, like the one shown in the last image.

I decided to use both NMOS and PMOS transistors, as in the first three images (green marks the output). In the last image, if b3b2b1 = 000, then the last row has all 1s, and thus the output voltage is 0V. In my case, I used PMOS transistors, which conduct when a 0 is applied to their gates.

The reference voltage is 8V, so with 3 bits (8 codes) the output should change by 1V for each LSB step.

So for 000 I should obtain 0V, and for 111 I should obtain 7V.
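To make the values I expect explicit, here is a small sketch of the ideal transfer function, assuming the output is simply code × Vref / 2^N. The function name `ideal_dac_output` and its parameters are just illustrative, not anything from LTspice.

```python
def ideal_dac_output(code: int, vref: float = 8.0, n_bits: int = 3) -> float:
    """Ideal output of an n-bit DAC: code * Vref / 2^n (assumed model)."""
    if not 0 <= code < 2 ** n_bits:
        raise ValueError(f"code must be in 0..{2 ** n_bits - 1}")
    return code * vref / 2 ** n_bits


# Print the expected voltage for every 3-bit input code.
for code in range(8):
    print(f"{code:03b} -> {ideal_dac_output(code):.1f} V")
```

With Vref = 8V and 3 bits, this gives 0V for 000, 7V for 111, and a 1V step between adjacent codes, which is what I am comparing the simulation against.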

But when I simulate, for 000 I obtain 1.2V, and for 111, I obtain 1.4V.

(For logic 1 I use 5V, and for logic 0 I use 0V.)

What is happening? I have very little experience with analog design.

When the transistors conduct, they have Rds = 0.002 ohm. The bit convention is b1b2b3, where b1 is the MSB.
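To avoid confusion about the bit order, this is a minimal sketch of how I map the bits to a code and to gate drive voltages, assuming b1 is the MSB and the 5V / 0V logic levels mentioned above. The helper names `bits_to_code` and `gate_voltages` are hypothetical and only for illustration.

```python
LOGIC_HIGH_V = 5.0  # voltage used for logic 1
LOGIC_LOW_V = 0.0   # voltage used for logic 0


def bits_to_code(b1: int, b2: int, b3: int) -> int:
    """Combine the bits into an integer code, with b1 as the MSB."""
    return (b1 << 2) | (b2 << 1) | b3


def gate_voltages(b1: int, b2: int, b3: int) -> dict:
    """Voltage applied for each bit and for its complement."""
    levels = {}
    for name, bit in (("b1", b1), ("b2", b2), ("b3", b3)):
        levels[name] = LOGIC_HIGH_V if bit else LOGIC_LOW_V
        levels[name + "_n"] = LOGIC_LOW_V if bit else LOGIC_HIGH_V
    return levels


print(bits_to_code(1, 0, 0))
print(gate_voltages(0, 0, 0))
```

For the all-zero input, every direct bit line is at 0V and every complemented line is at 5V.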
