Hey, I have a solar panel that can deliver up to 6V / 250 mA on a clear day. As the sun wanes, of course, the voltage drops off. My load, a small 5V LiPo battery charger, is fed through an LM7805 so that the charger never sees more than 5V. The circuit seems to work well enough as it is: my LiPo batteries get charged eventually, depending on how much sun we have.

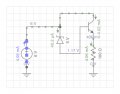

My question: how would I design a circuit that doesn't power the LM7805 until the panel voltage reaches at least 5V? I read through Horowitz & Hill (The Art of Electronics) and Practical Electronics for Inventors, and simulated a circuit using zener diodes. But when the voltage falls below the zener's breakdown level, the current simply takes the parallel path and bypasses the zener, so the load still sees any voltage < 5V. At a low enough voltage, of course, the LM7805 doesn't conduct, so it kind of works out, but I'd like to know how to implement this properly.
