I keep running across the term Input Impedance and yes, I do know what impedance is. But I seem to be missing some nuance of it. Op amps have near-infinite Input Impedance and, if I understand it correctly, higher Input Impedance has some effect on amplification. But just how does it do so? From my experience, higher resistance/impedance attenuates, so how does a higher Input Impedance lead to greater amplification? Just what am I missing here?

Affect of Input Impedance on Amplification

- Thread starter SamR

"From my experience, higher resistance/impedance attenuates so how does a higher Input Impedance lead to greater amplification or just what am I missing here?"

Experience with what circuits?

The circuit's input impedance forms a voltage divider with the source (signal) impedance, so a higher input impedance reduces the attenuation caused by that divider.
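As a rough sketch of that divider, assuming a source voltage V_s behind a source impedance Z_s driving an input impedance Z_in (the symbol names are mine, not from the thread):

```latex
V_{in} = V_s \cdot \frac{Z_{in}}{Z_s + Z_{in}},
\qquad
\lim_{Z_{in} \to \infty} V_{in} = V_s,
\qquad
Z_{in} = Z_s \;\Rightarrow\; V_{in} = \tfrac{1}{2} V_s
```

So a larger Z_in moves the fraction toward 1: the input impedance sits across the signal rather than in series with it, which is why making it higher reduces the loss.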

Perhaps you are thinking about source impedance instead of input impedance(?).

"The circuit input impedance causes a voltage divider action"

Apparently, this is what I don't understand. Any Ohmic/resistive action, to me, indicates a drop in current which would lessen the input signal? From my radio and antenna perspective, the goal was to match impedance to prevent signal attenuation.

The preamp has an output impedance, effectively the value of an imaginary resistor in series with an otherwise perfect (zero-impedance) output. In practice it is often a protection resistor (say 220Ω) in series with the output of an op-amp. Let's assume that the output impedance is 220Ω.

The power amplifier that it is driving will have an input impedance. Normally it's a resistor across the input terminals. It serves two purposes: it provides DC bias current to the amplifier transistor, and it stops the amplifier going completely berserk and humming like crazy if there is no signal connected.

The output impedance of the preamp and the input impedance of the power amp form a potential divider. If they were equal then the signal at the input of the power amplifier would be reduced by half.

Normally the input impedance is large (say 22k), so it only loses about 1%.

Higher output impedance reduces the signal.
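The numbers in this post can be checked with a few lines of Python. This is only a sketch that treats both impedances as plain resistances; the function and variable names are made up for illustration.

```python
def divider_fraction(z_out: float, z_in: float) -> float:
    """Fraction of the source voltage that appears across z_in."""
    return z_in / (z_out + z_in)


preamp_out = 220.0        # ohms, the assumed preamp output impedance
power_amp_in = 22_000.0   # ohms, the "say 22k" input impedance

bridged = divider_fraction(preamp_out, power_amp_in)
matched = divider_fraction(preamp_out, preamp_out)  # equal impedances

print(f"22k input: keeps {bridged:.4f} of the signal, loses {100 * (1 - bridged):.2f}%")
print(f"matched:   keeps {matched:.4f} of the signal, loses {100 * (1 - matched):.2f}%")
```

Raising `preamp_out` while holding `power_amp_in` fixed makes the kept fraction smaller, which is the "higher output impedance reduces the signal" point.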

Audioguru again

- Joined Oct 21, 2019

- 6,826

We do not match an output impedance to a load impedance in audio circuits because the voltage divider would reduce the signal level to half (-6dB).

Instead we use a low output impedance (of a preamp) feeding a high input impedance (of a power amplifier) so that there is almost no signal level loss.
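To put the -6dB figure next to the low-into-high case, here is a small sketch in decibels. The 220Ω and 22k values are borrowed from the earlier post as an example; the helper name is hypothetical.

```python
import math


def level_change_db(z_out: float, z_in: float) -> float:
    """Voltage level change, in dB, caused by the z_out / z_in divider."""
    return 20.0 * math.log10(z_in / (z_out + z_in))


matched_db = level_change_db(220.0, 220.0)      # output matched to load
bridging_db = level_change_db(220.0, 22_000.0)  # low output into high input

print(f"matched:  {matched_db:.2f} dB")
print(f"bridging: {bridging_db:.3f} dB")
```

The matched case comes out at about -6 dB, while the low-into-high case is a loss of less than a tenth of a dB.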

"the goal was to match impedance to prevent signal attenuation."

No.

The goal was for maximum power transfer, such as for a transmitter, which occurs when the load impedance matches the source impedance.

That gives a voltage signal attenuation of 50%.
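A quick numerical sketch of both statements, assuming a 1 V source with a purely resistive 50Ω source impedance (both values are illustrative assumptions, not from the thread):

```python
def load_power(v_source: float, r_source: float, r_load: float) -> float:
    """Power dissipated in r_load when driven through r_source."""
    current = v_source / (r_source + r_load)
    return current * current * r_load


v_source = 1.0    # volts (assumed)
r_source = 50.0   # ohms (assumed)
loads = [5.0, 25.0, 50.0, 100.0, 500.0]

powers = {r: load_power(v_source, r_source, r) for r in loads}
best = max(powers, key=powers.get)              # load that receives the most power
v_at_best = v_source * best / (r_source + best)  # voltage across that load

for r, p in powers.items():
    print(f"R_load = {r:6.1f} ohm -> {1000 * p:.3f} mW")
print(f"most power at R_load = {best} ohm, where the load sees {v_at_best} V")
```

Among the loads tried, power peaks where the load equals the source resistance, and at that point the load voltage is half the source voltage.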

"Ok, found what I was looking for, the AC Voltage Divider rule. Need to brush up more on my AC theory..."

Lol I'm always way off in your topics. Good luck with your quest.

"The goal was for maximum power transfer"

Exactly, and any loss of power is signal attenuation.

Audioguru again

- Joined Oct 21, 2019

- 6,826

"Exactly, and any loss of power is signal attenuation (such as for a transmitter)."

Not for DC and audio.

Maximum power transfer is needed with high frequency radio signals because a mismatch causes the signal to reflect off the mismatched distant end, resulting in a loss of power at the antenna.

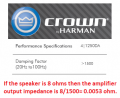

DC or AC audio signals do not reflect between the output of an amplifier and the load. With those signals, a matched output impedance would simply act as a voltage divider that attenuates the signal and heats the amplifier. The output impedance of audio power amplifiers is therefore very low. A Crown amplifier that normally drives an 8 ohm speaker has an output impedance of 0.0053 ohms!
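As a rough illustration of the "heats the amplifier" point, the sketch below splits the delivered power between the output impedance and the speaker. It treats the output impedance as a plain series resistor, which is a simplification (in a real amplifier a low output impedance largely comes from feedback), and the function name is hypothetical.

```python
def power_split(r_out: float, r_load: float) -> tuple[float, float]:
    """Share of total power dissipated in r_out and in r_load (series circuit)."""
    total = r_out + r_load
    return r_out / total, r_load / total


# Values quoted above: 0.0053 ohm output impedance, 8 ohm speaker
amp_share, speaker_share = power_split(0.0053, 8.0)

# Hypothetical matched case: 8 ohm output impedance into an 8 ohm speaker
matched_amp_share, matched_speaker_share = power_split(8.0, 8.0)

print(f"low-Z amp:   {100 * amp_share:.3f}% lost in the output impedance")
print(f"matched amp: {100 * matched_amp_share:.1f}% lost in the output impedance")
```

Under this simplified model, the matched case burns half the power inside the amplifier, while the low-impedance output loses well under a tenth of a percent.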

tonyStewart

- Joined May 8, 2012

- 231

"Apparently, this is what I don't understand. Any Ohmic/resistive action, to me, indicates a drop in current which would lessen the input signal? From my radio and antenna perspective, the goal was to match impedance to prevent signal attenuation."

Matching impedance follows from the rule for achieving Maximum Power Transfer (MPT). For RF, impedance is controlled by the aspect ratios of conductor lengths (L) and dielectric gaps. When RF impedances are mismatched, wave reflections attenuate the signal. At lower frequencies, resistance or |impedance| ratios determine the attenuation, and this also applies to DC power supplies: the load regulation error of a supply is essentially the ratio of its source impedance to the load impedance.
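The load regulation remark can be worked out from the same divider, assuming load regulation is defined as the drop from no-load to full-load voltage relative to the full-load voltage (definitions vary between datasheets), with R_s the source resistance and R_L the load resistance:

```latex
V_{NL} = V_s, \qquad V_{FL} = V_s \cdot \frac{R_L}{R_s + R_L}

\text{load regulation} = \frac{V_{NL} - V_{FL}}{V_{FL}}
  = \frac{R_s + R_L}{R_L} - 1
  = \frac{R_s}{R_L}
```

Under that definition, a supply quoted at 1% load regulation has a source resistance of about 1% of its rated load resistance.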

MisterBill2

- Joined Jan 23, 2018

- 27,407

What was neglected at the start of this thread is that all "real" voltage sources also have an internal resistance, or impedance if they are AC sources. So with any real external load, which also has some resistance or impedance, there is a voltage drop across both as any current flows. BUT if no current flows then impedance does not matter. And if the current that does flow is too small to have a noticeable effect (as in the input circuits of audio equipment), then the divider loss does not matter and other considerations apply.
