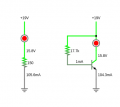

Consider the following two circuits featuring a ~100mA LED with a forward voltage of ~3.5V.

In both cases, roughly the same amount of heat is going to be generated by the LED. However, in the first circuit the resistor is going to dissipate ~1.7W, whereas in the second circuit it dissipates only ~0.02W.

So it appears that a current source would clearly be the more efficient way to go about it (in this obviously contrived example, at least). Are there any caveats to such an approach?
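
For reference, here is a rough sketch of the arithmetic behind those figures. It only uses the approximate numbers quoted above and assumes that in both circuits the resistor carries the full ~100mA LED current (the schematics are in the image, so that is an assumption here). The function and constant names are made up for illustration.

```python
# Approximate figures quoted in the question.
LED_CURRENT_A = 0.100           # ~100 mA through the LED
LED_FORWARD_V = 3.5             # ~3.5 V forward voltage
P_RESISTOR_CIRCUIT_1_W = 1.7    # resistor dissipation, first circuit
P_RESISTOR_CIRCUIT_2_W = 0.02   # resistor dissipation, second circuit


def resistor_drop_v(power_w: float, current_a: float) -> float:
    """Voltage across a resistor, from P = V * I."""
    return power_w / current_a


def resistance_ohm(power_w: float, current_a: float) -> float:
    """Resistance value, from P = I^2 * R."""
    return power_w / current_a ** 2


def led_power_w(forward_v: float, current_a: float) -> float:
    """Power dissipated in the LED, from P = Vf * I."""
    return forward_v * current_a


if __name__ == "__main__":
    # Assumption: the resistor is in series with the LED in both circuits.
    for name, p in (("circuit 1", P_RESISTOR_CIRCUIT_1_W),
                    ("circuit 2", P_RESISTOR_CIRCUIT_2_W)):
        v = resistor_drop_v(p, LED_CURRENT_A)
        r = resistance_ohm(p, LED_CURRENT_A)
        print(f"{name}: resistor drop {v:.2f} V, resistance {r:.1f} ohm")
    print(f"LED: {led_power_w(LED_FORWARD_V, LED_CURRENT_A):.2f} W")
```

Under that assumption, the quoted figures correspond to about 17V across roughly 170Ω in the first circuit and about 0.2V across roughly 2Ω in the second, with the LED itself dissipating about 0.35W in either case.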
